
Previous research on multi-modal systems has shown they can be prone to simple adversarial attacks. OpenAI's CLIP model, for example, which is trained on images and text, will incorrectly identify an image of an apple as an iPod if the word "iPod" appears in the picture. It's unclear, however, if data2vec suffers from similar weaknesses.

"We have not specifically analyzed how our models will react to adversarial examples but since our current models are trained separately for each modality, we believe that existing research on adversarial attack analysis for each modality would be applicable to our work as well," Baevski said. "In the future, we hope to use our work to enable high performance algorithms that combine modalities in one model and we plan to study how susceptible they are to adversarial attacks."

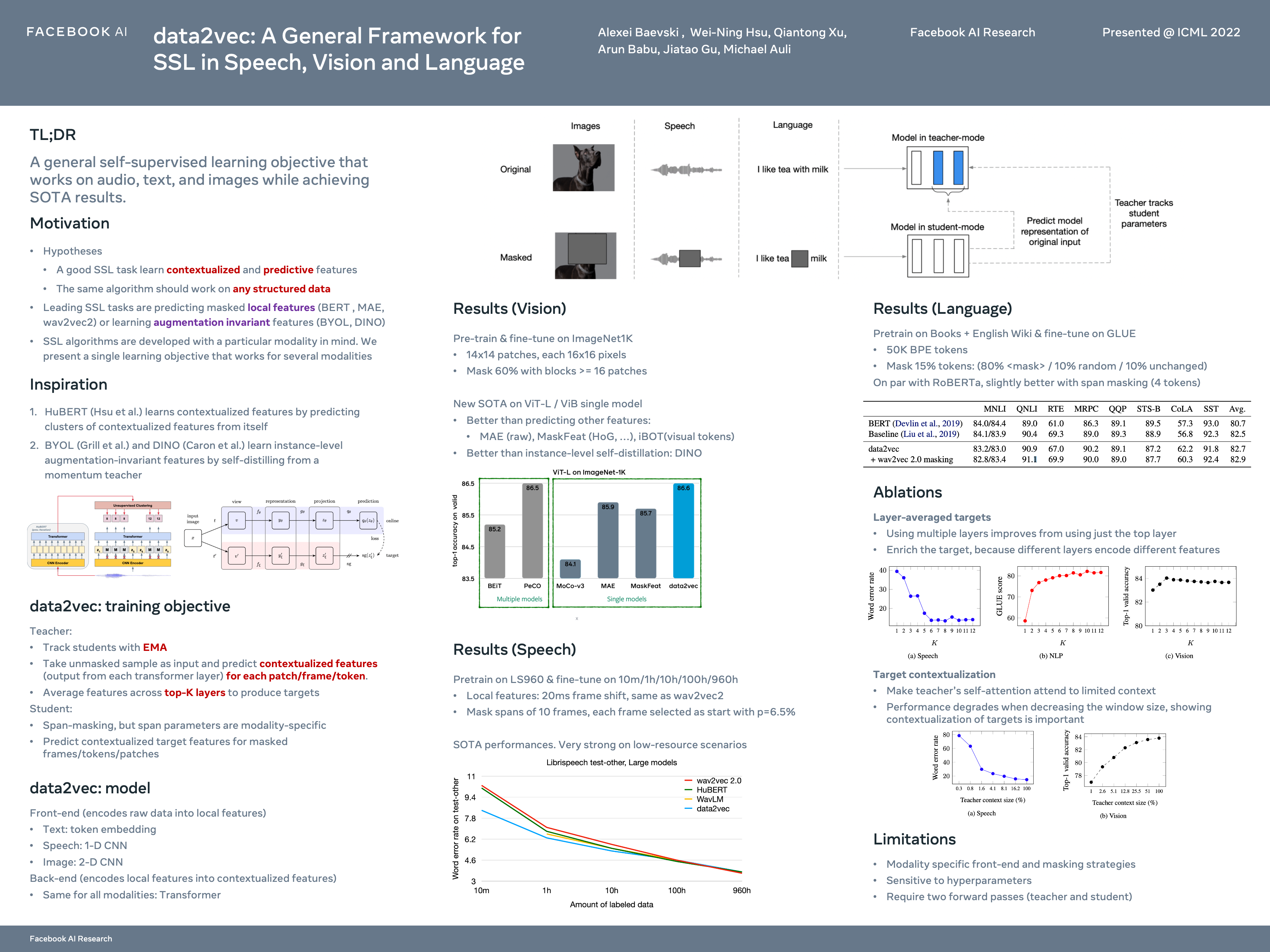

"We train separate models for each modality but the process through which the models learn is identical," Alexei Baevski, a research engineer at Meta AI, told The Register. "We hope that it will enable future work to build high performing self-supervised models that combine modalities and are more effective than specialized models. Different modalities can add additional information to the same piece of content: for example, body language from video, prosodic information from audio, and text can combine into a richer representation of a dialog. The algorithms that currently try to combine multi-modal information exist but they do not yet perform well enough to replace specialized algorithms and we hope our work will help change that."

Baevski said that in the future multi-modal systems could incorporate a larger range of data to model concepts like smell, 3D objects, or videos. He referred back to the idea of AR glasses helping wearers cook.

"Imagine having a model that has been trained on recordings of thousands of hours of cooking activity from various restaurants and chefs. Then, when you are cooking in a kitchen wearing your AR glasses that have access to this model, it’s able to overlay visual cues for what you need to do next, point out potential mistakes, or explain how adding a particular ingredient will affect the taste of your dish," he told us.
